MV-3000: DOE using MotionView - HyperStudy

Tutorial Level: Intermediate

- Use HyperStudy to set up a DOE study of a MotionView model

- Perform DOE study in the MotionView – HyperStudy environment

- Create an approximation (using the DOE results) that can subsequently be used to optimize the MotionView model

Theory

HyperStudy allows you to perform Design of Experiments (DOE), optimization, and stochastic studies in a CAE environment. The objective of a DOE study is to understand how changes to the parameters (design variables) of a model influence its performance (responses).

After a DOE study is complete, an approximation can be created from its results. The approximation is a polynomial equation that expresses an output as a function of all the input variables; this is called the regression equation.

The regression equation can then be used to perform optimization.
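For illustration only, a second-order polynomial (a quadratic response surface) for a response $y$ in $n$ design variables $x_1, \dots, x_n$ commonly takes the form below, with the coefficients $\boldsymbol{\beta}$ found by least squares from the DOE results, assuming $X^{\mathsf{T}} X$ is invertible. This generic textbook form is an assumption here; the terms HyperStudy actually uses depend on the approximation type you select.

```latex
\hat{y}(\mathbf{x}) = \beta_0 + \sum_{i=1}^{n} \beta_i x_i + \sum_{i=1}^{n} \beta_{ii} x_i^2 + \sum_{i=1}^{n-1} \sum_{j=i+1}^{n} \beta_{ij} x_i x_j,
\qquad
\boldsymbol{\beta} = \left(X^{\mathsf{T}} X\right)^{-1} X^{\mathsf{T}} \mathbf{y}
```

Each row of $X$ holds these polynomial terms evaluated at one DOE run, and $\mathbf{y}$ holds the corresponding responses. A two-level design, such as the full factorial used later in this tutorial, cannot estimate the pure quadratic terms $\beta_{ii}$ separately: with only two levels per variable, the $x_i^2$ column is a linear combination of the constant and $x_i$ columns.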

HyperStudy can be used to study different aspects of a design under various conditions, including non-linear behavior.

- Provides a variety of DOE study types, including user-defined

- Facilitates multi-disciplinary DOE, optimization, and stochastic studies

- Provides a variety of sampling techniques and distributions for stochastic studies

- Parameterizes any solver input model via a user-friendly interface

- Uses an extensive expression builder to perform mathematical operations

- Uses a robust optimization engine

- Includes built-in support for post-processing study results

- Supports multiple formats for study results, such as MVW and TXT

Tools

- From the Menu bar, select Applications > HyperStudy.

You can then select MDL property data as design variables in a DOE or an optimization exercise. Solver scripts registered in the MotionView Preferences file are available through the HyperStudy interface to conduct sequential solver runs for DOE or optimization.

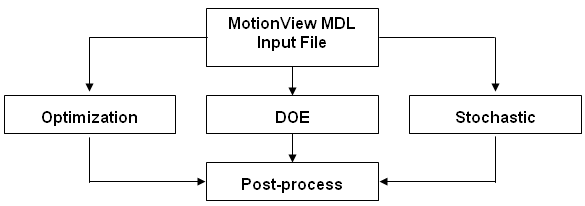

For any study, the HyperStudy process is shown below:

The HyperStudy Process

MotionView MDL files can be loaded directly into HyperStudy. Any solver input file, such as a MotionSolve, ADAMS, or Abaqus input file, can be parameterized and the resulting template file submitted as input to HyperStudy. The parameterized file identifies the design variables to be changed during the DOE, optimization, or stochastic study. The solver runs are then carried out accordingly, and the results are post-processed within HyperStudy.
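Conceptually, parameterizing a solver input file turns fixed numbers into placeholders that are filled in for each run. The sketch below uses Python's `string.Template` on a made-up XML-like fragment with hypothetical placeholder names (`inner_x`, `inner_y`, `outer_x`, `outer_y`). It is not HyperStudy's own template syntax and not real MotionSolve input; it only shows the substitution idea.

```python
from string import Template

# Made-up XML-like fragment (not real MotionSolve syntax); each $name is a
# hypothetical placeholder for one design variable.
TEMPLATE = Template(
    '<Point id="inner_tie_rod" x="$inner_x" y="$inner_y"/>\n'
    '<Point id="outer_tie_rod" x="$outer_x" y="$outer_y"/>\n'
)


def write_run_input(values: dict) -> str:
    """Return the input text for one run by substituting design-variable values."""
    # Template.substitute raises KeyError if any placeholder has no value.
    return TEMPLATE.substitute({name: f"{v:.3f}" for name, v in values.items()})


# One hypothetical run: each design variable set to a value from the DOE table.
print(write_run_input({"inner_x": 10.0, "inner_y": -250.0,
                       "outer_x": 12.5, "outer_y": -700.0}))
```

Raising an error on a missing value is deliberate: a run whose input still contains unfilled placeholders should fail at the write stage rather than reach the solver.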

Copy the files hs.mdl and target_toe.csv, located in the mbd_modeling\doe folder, to your <working directory>.

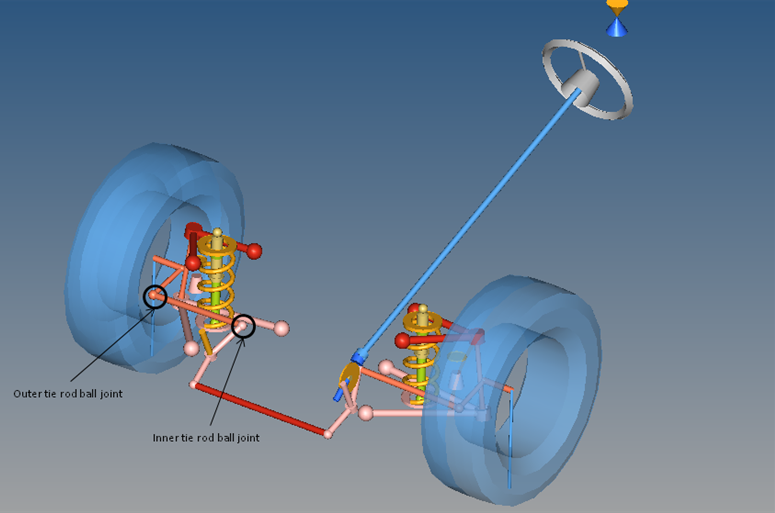

In the following steps, you will set up a study and then carry out a DOE study on a front SLA suspension model.

Using a Static Ride Analysis, you will determine how varying the coordinates of the origin points of the inner and outer tie-rod joints affects the toe curve.

Step 1: Study Setup

- Start a new MotionView session.

- Click the Open Model icon on the Model-Main toolbar.

- Select the model file hs.mdl, located in your <working directory>, and click Open.

- Review the model and the toe-curve output request under Static Ride Analysis.

- From the Applications menu, select HyperStudy.

HyperStudy is launched and the message "Establishing connection between MotionView and HyperStudy" is displayed.

- Start a new study in one of the following ways:

- From the Welcome page, click the New Study icon,

.

. - From the toolbar, click the New Study icon,

.

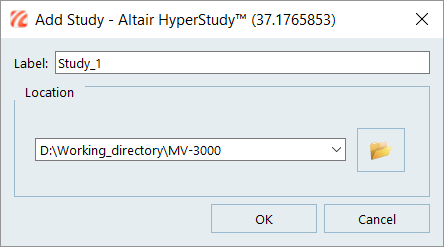

. - From main menu, select . The HyperStudy - Add Study dialog is displayed.

Figure 3.

- From the Welcome page, click the New Study icon,

- Accept the default label name.

- Under Location, click the file browser and select <working directory>\.

- Click OK.

-

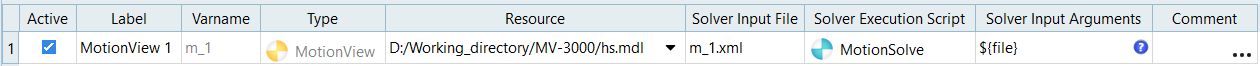

Model definition.

- Model data.

Note that the following details are filled in automatically when you define the model in the previous step:

- Under Active, select or clear the check box to activate or deactivate the model in the study.

- The model label entered in the previous step.

- The model variable name entered in the previous step.

- The model type selected in the previous step.

- Point to the source file (here, the model file is sourced from MotionView through the MotionView – HyperStudy interface).

- Enter a name for the solver input file with the proper extension (.xml for MotionSolve) and select the solver execution script MotionSolve - standalone (ms).

-

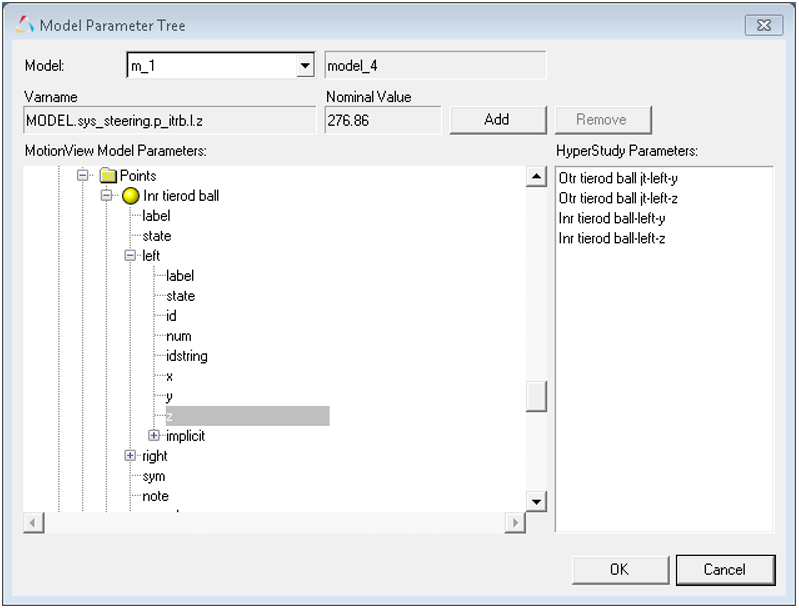

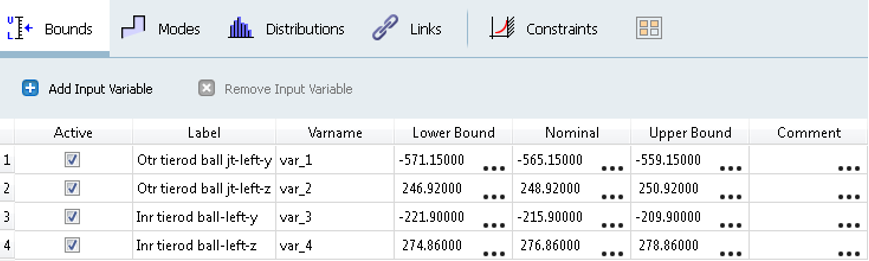

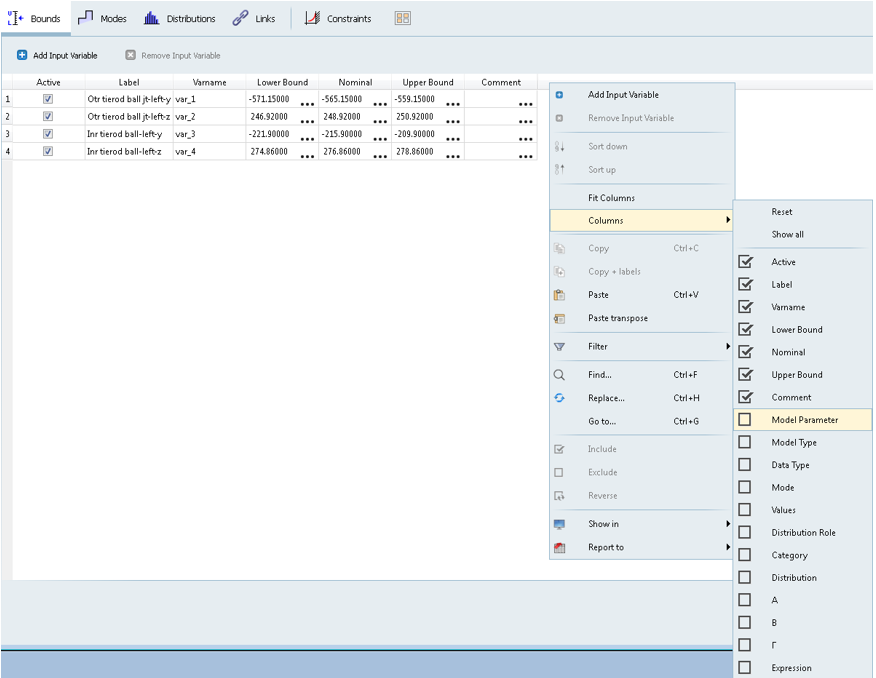

Create design variables.

- Define design variables.

-

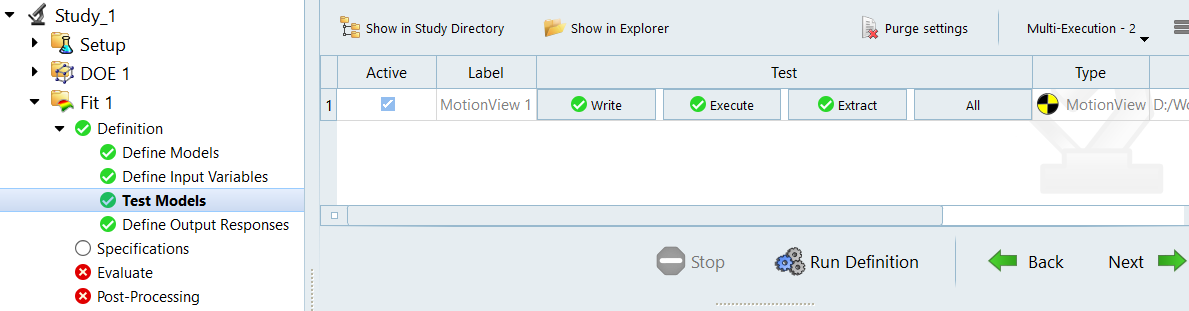

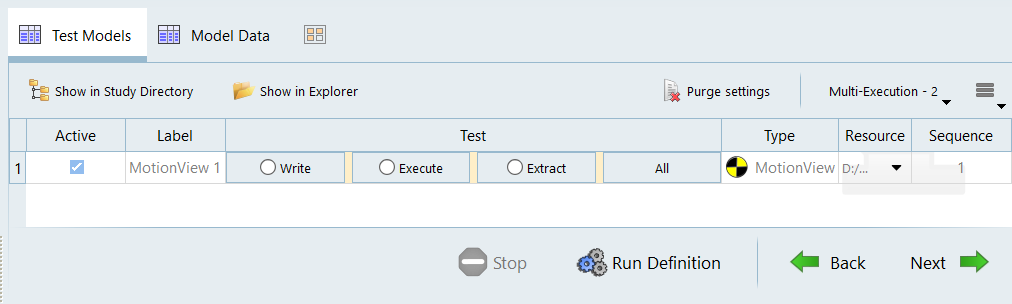

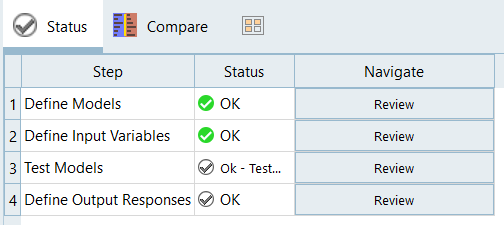

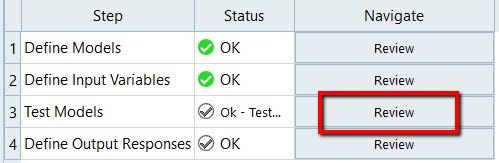

Test models.

This step lets you perform a test run to verify that HyperStudy can execute a run and extract the results by accessing the run's output files.

Figure 8.

-

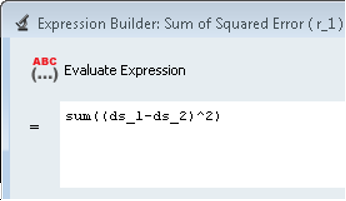

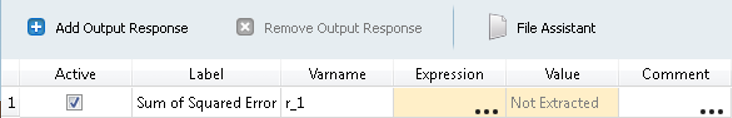

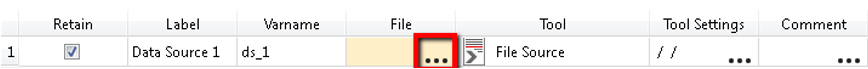

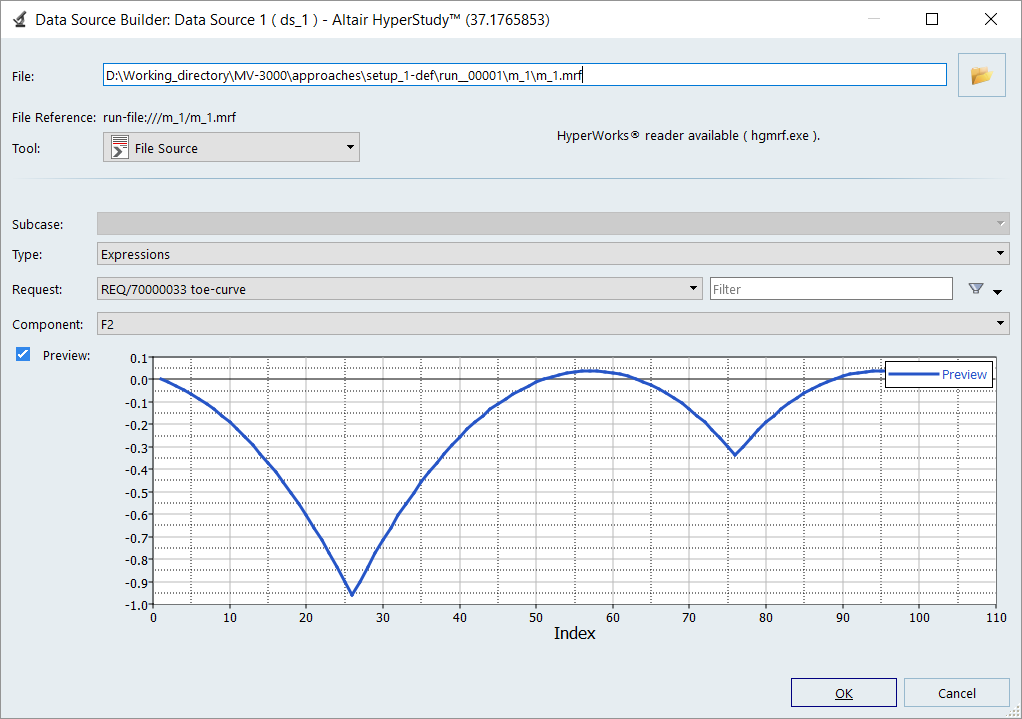

Define Response.

-

Data Source 1

-

Data Source 2

-

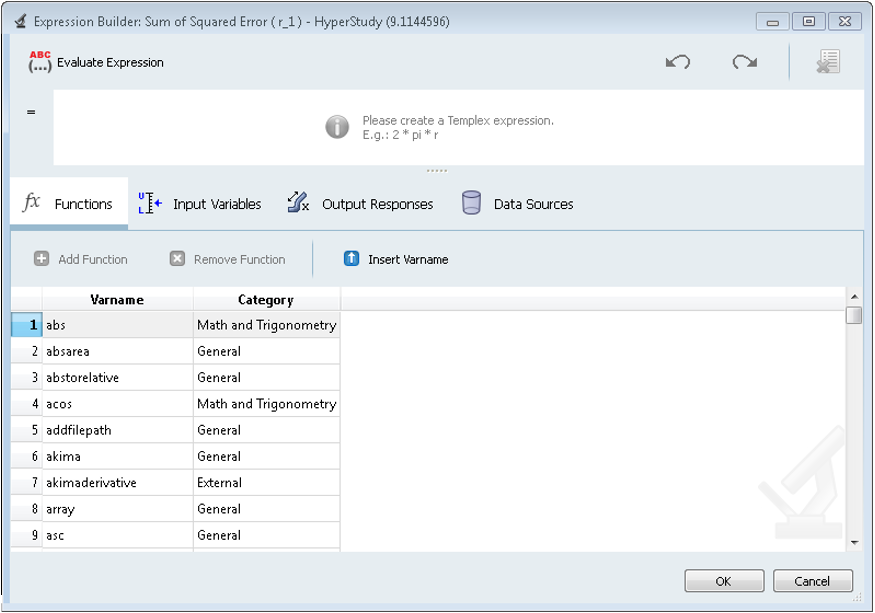

In the Expression field, create the following expression:

sum((ds_1-ds_2)^2)

This expression evaluates the sum of the squares of the differences between the “actual toe change” values (from the solver run) and the “targeted toe curve” values (from the imported file). In the next tutorial, MV-3010, we will use HyperStudy to minimize the value of this expression to obtain the required suspension configuration.

Figure 14.
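The expression `sum((ds_1-ds_2)^2)` is an ordinary sum of squared differences. A minimal Python equivalent, assuming `ds_1` and `ds_2` are equal-length sequences of toe values sampled at the same wheel-travel points. The function name is hypothetical and the numbers in the usage line are made up, not the tutorial's data.

```python
from typing import Sequence


def sum_squared_error(actual: Sequence[float], target: Sequence[float]) -> float:
    """Python equivalent of the HyperStudy expression sum((ds_1-ds_2)^2)."""
    if len(actual) != len(target):
        raise ValueError("data sources must have the same number of points")
    # Square each point-wise difference and add them up.
    return sum((a - t) ** 2 for a, t in zip(actual, target))


# Made-up toe values at three wheel-travel positions.
print(sum_squared_error([0.1, 0.0, -0.2], [0.0, 0.0, 0.0]))
```

Squaring makes deviations above and below the target count equally, and the result is zero only when the two curves coincide at every point, which is why this quantity works as an objective to minimize.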

- Click Evaluate expression to verify that the expression is evaluated correctly. You should get a value of 16.284.

- Click OK to close the Expression Builder, and then click the Evaluate button.

- From the File menu, select Save As….

- Save this study set-up as setup.hstudy to your <working directory>\.

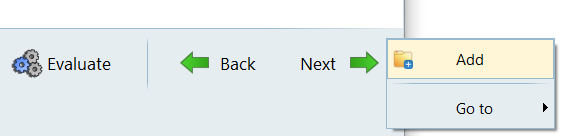

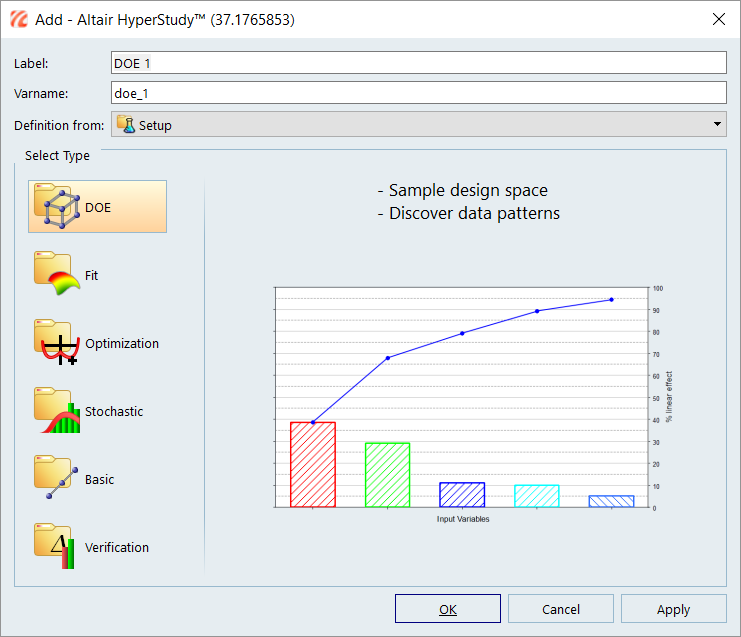

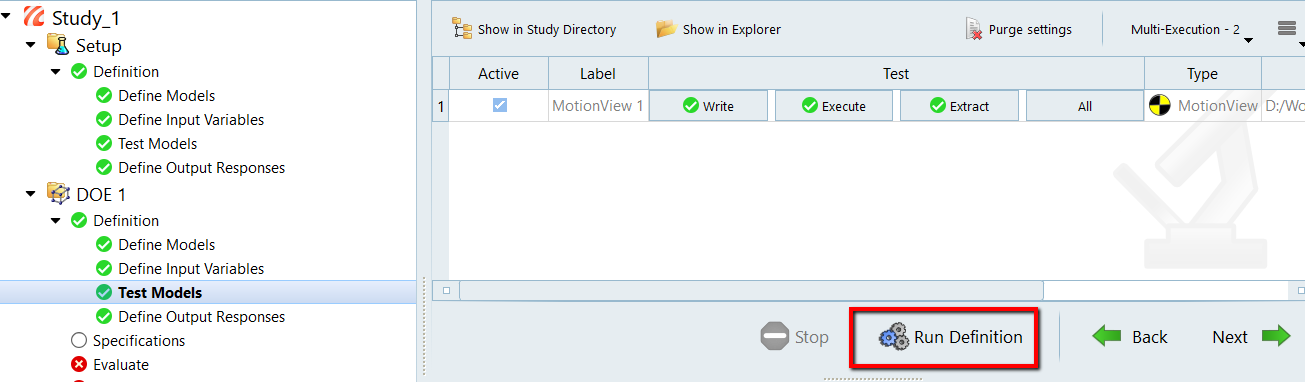

Step 2: DOE Study

- Add a new DOE study.

- Define test models.

- Select responses for the DOE study:

- There is only one response in the present study; make sure it is selected.

- Click Next to go to Specifications.

-

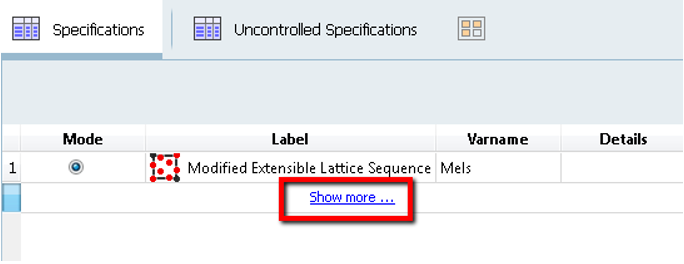

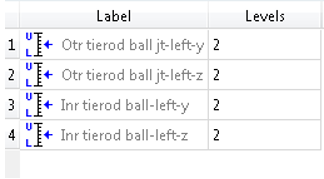

Specifications for the DOE study:

The design space for the DOE study is created in this step. The present study has four design variables with two levels each. A full factorial design gives 2^4 = 16 experiments; because this number is small, we will run a full factorial.
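A two-level full factorial simply enumerates every combination of low and high levels. The sketch below builds the 2^4 = 16 runs in coded units (-1 for the lower bound, +1 for the upper bound) and maps a coded level back to a physical value. The variable names are hypothetical placeholders for the four tie-rod coordinates; HyperStudy generates and scales this table for you when you click Apply.

```python
from itertools import product

# Hypothetical names for the four design variables in this study.
VARIABLES = ["inner_x", "inner_y", "outer_x", "outer_y"]


def full_factorial(n_vars, levels=(-1, 1)):
    """Return every combination of the given levels for n_vars variables."""
    return [list(run) for run in product(levels, repeat=n_vars)]


def to_physical(coded, lower, upper):
    """Map a coded level in [-1, 1] linearly onto [lower, upper]."""
    return lower + (coded + 1) * (upper - lower) / 2


runs = full_factorial(len(VARIABLES))
print(len(runs))  # 2 ** 4 = 16 experiments
```

The run count grows as 2^n, which is why a full factorial is practical here with four variables but quickly becomes expensive as more design variables are added.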

- Click Apply to generate the design space.

- Click Next to go to Evaluate.

-

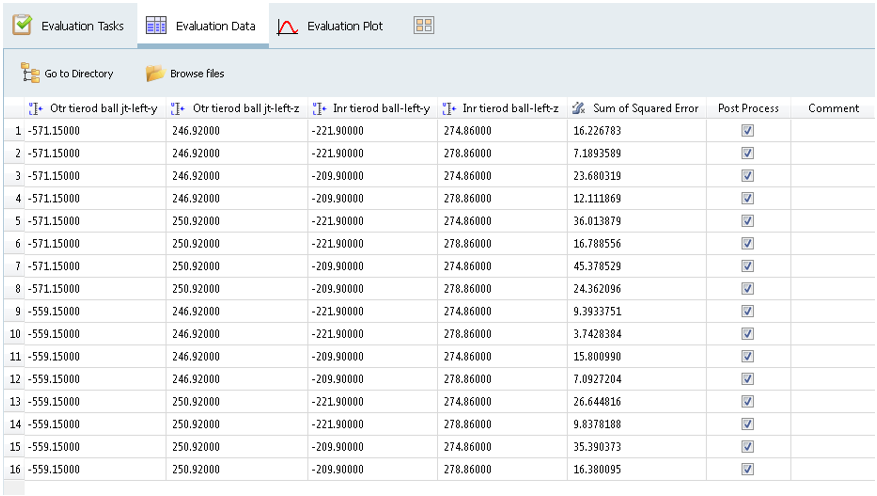

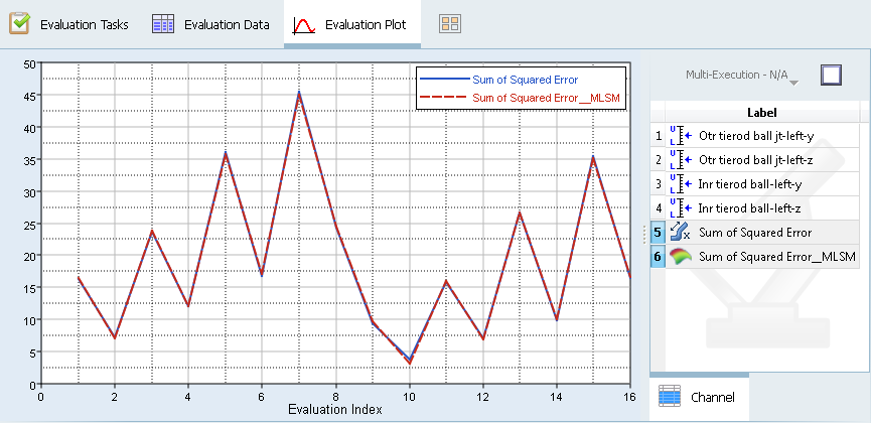

DOE run:

The Tasks tab of the Evaluate step shows a table with 16 rows and four columns. Column 1 shows the experiment number; the other columns are updated with the status (success or failure) of each experiment in the three stages of model execution: Write, Execute, and Extract.

The design variable values used in each experiment can be seen on the Evaluation Data tab.

The last column corresponds to the response value from each run. These values are populated once the run is complete.
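The Write, Execute, and Extract stages can be pictured as a loop over the experiments that records a status for each stage. The sketch below is hypothetical: `write_input`, `run_solver`, and `extract_response` are illustrative stand-ins (a toy formula replaces the MotionSolve run), not HyperStudy functions.

```python
from itertools import product

STAGES = ("write", "execute", "extract")


def write_input(run_id, values):
    # Hypothetical stand-in for writing the solver input file of one run.
    return {"run": run_id, "values": values}


def run_solver(deck):
    # Hypothetical stand-in for the solver: a toy formula replaces MotionSolve.
    x = deck["values"]
    return {"toe_error": sum(v * v for v in x) + 0.5 * x[0] * x[1]}


def extract_response(results):
    # Hypothetical stand-in for reading the response from the solver output.
    return results["toe_error"]


def evaluate(designs):
    """Run each experiment through Write -> Execute -> Extract, recording status."""
    table = []
    for run_id, values in enumerate(designs, start=1):
        row = {"run": run_id, **{s: "not run" for s in STAGES}, "response": None}
        stage = "write"
        try:
            deck = write_input(run_id, values)
            row["write"] = "success"
            stage = "execute"
            results = run_solver(deck)
            row["execute"] = "success"
            stage = "extract"
            row["response"] = extract_response(results)
            row["extract"] = "success"
        except Exception:
            # Mark the stage that failed; later stages stay "not run".
            row[stage] = "failure"
        table.append(row)
    return table


table = evaluate(product((-1, 1), repeat=4))
print(sum(row["extract"] == "success" for row in table), "of", len(table), "runs succeeded")
```

Recording the status per stage mirrors why the Tasks tab is useful for debugging: a failure at Write, Execute, or Extract points to a different part of the setup.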

-

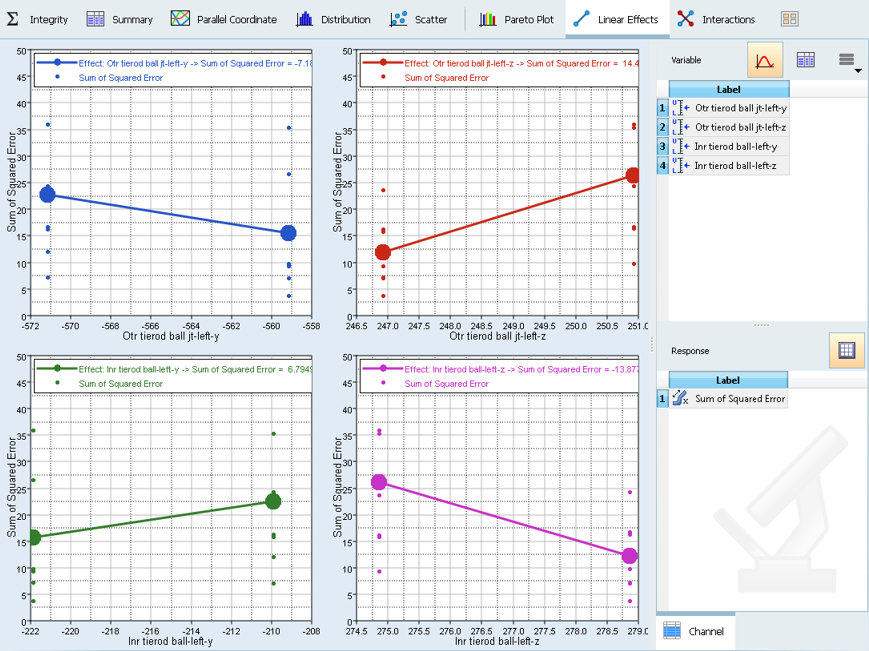

Viewing Main Effect and Interaction plots:

The post-processing section provides a variety of utilities to help you post-process results effectively. The Summary tab of the Post-Processing page provides a summary of the designs along with their responses.

The new-generation HyperStudy lets you sort data by right-clicking a column heading and selecting an option from the context menu.

Other post-processing options are available on the remaining tabs. The main effects can be plotted by selecting the Linear Effects tab.
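In a two-level design, the main (linear) effect of a variable can be computed as the average response at its high level minus the average response at its low level. A minimal sketch in coded units; the function name is hypothetical, the responses come from a made-up formula rather than the tutorial's results, and HyperStudy's Linear Effects plot may present the effect differently (for example, as the two level means).

```python
from itertools import product
from statistics import mean


def main_effect(runs, responses, var_index):
    """Mean response at the +1 level minus mean response at the -1 level."""
    high = [y for x, y in zip(runs, responses) if x[var_index] == 1]
    low = [y for x, y in zip(runs, responses) if x[var_index] == -1]
    return mean(high) - mean(low)


# Made-up responses: y = 3*x1 - 2*x2 + x1*x2 (x3 and x4 have no effect).
runs = list(product((-1, 1), repeat=4))
responses = [3 * x[0] - 2 * x[1] + x[0] * x[1] for x in runs]
for i in range(4):
    print(f"x{i + 1}: {main_effect(runs, responses, i):+.1f}")
```

Because the full factorial is balanced, the x1*x2 term averages out of each main effect, so the effects of x1 and x2 come out as twice their coded coefficients and x3 and x4 show no effect.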

-

Main Effects

-

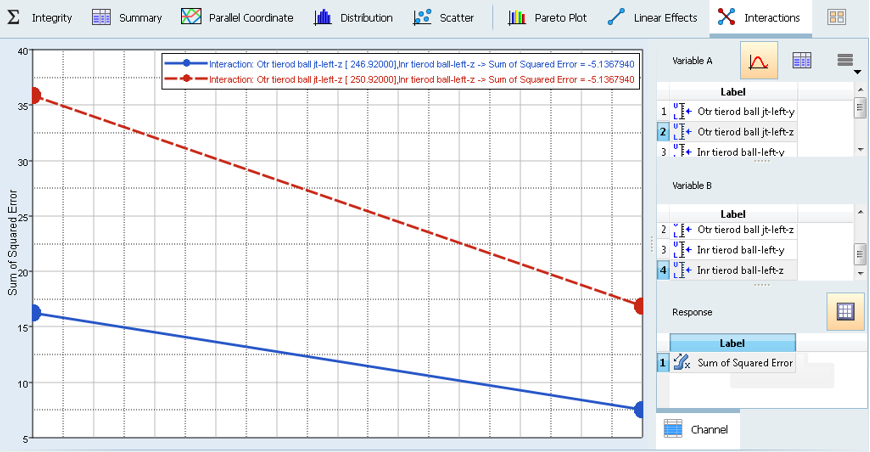

Interactions

An interaction is the failure of one variable to produce the same effect on the output response at different levels of another input variable. In other words, the strength or the sign (direction) of an effect is different depending on the value (level) of some other variable(s). An interaction can be either positive or negative.

Interactions can be plotted from the Interactions tab using the same procedure.

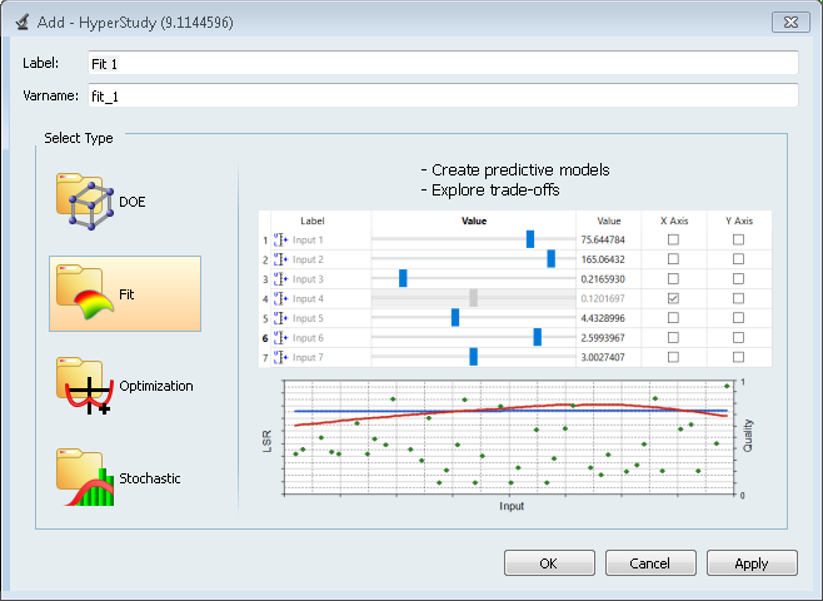

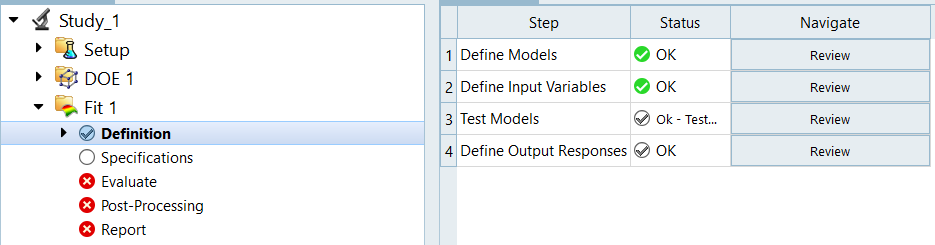

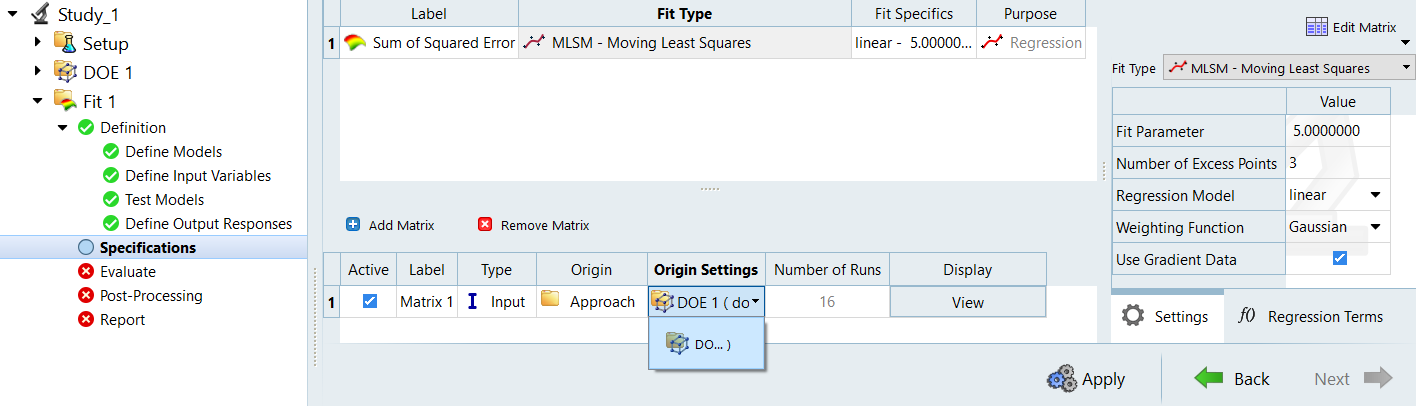

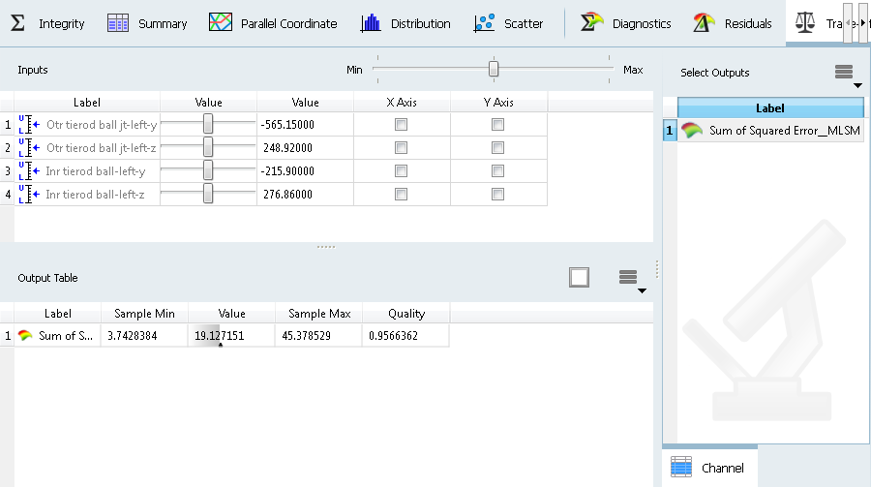

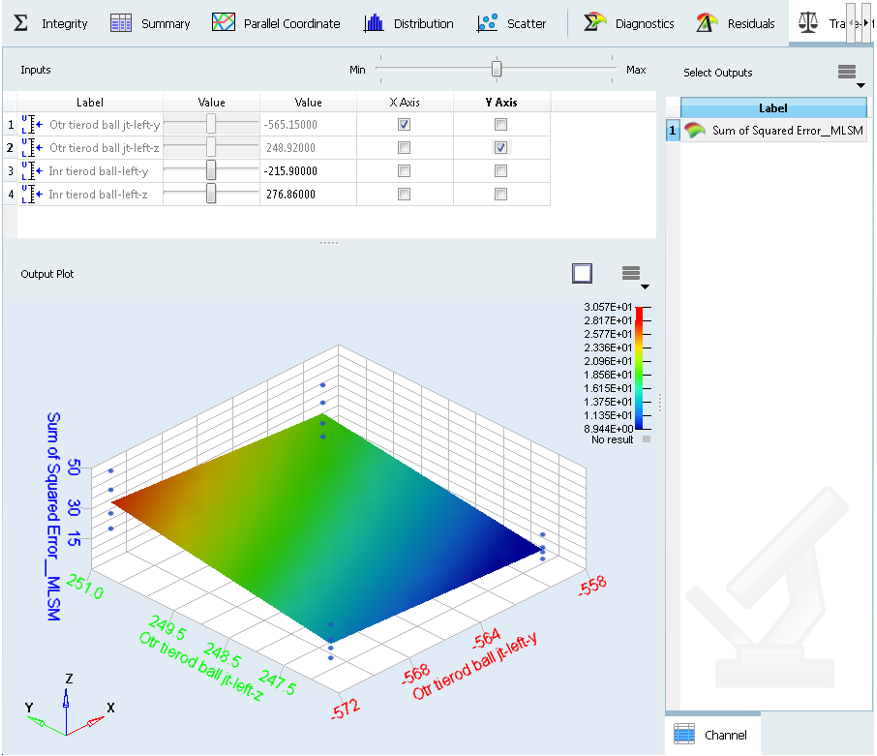
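For two variables in a two-level full factorial, the interaction effect can be computed as half the change in one variable's effect between the two levels of the other. In a balanced design this equals the mean response where the product of the two coded levels is +1 minus the mean where it is -1, which is what the sketch below computes. The function name and the made-up data are assumptions for illustration.

```python
from itertools import product
from statistics import mean


def interaction_effect(runs, responses, i, j):
    """Two-factor interaction: mean(y | x_i*x_j = +1) - mean(y | x_i*x_j = -1)."""
    same = [y for x, y in zip(runs, responses) if x[i] * x[j] == 1]
    opposite = [y for x, y in zip(runs, responses) if x[i] * x[j] == -1]
    return mean(same) - mean(opposite)


# Made-up responses with a genuine x1-x2 interaction term.
runs = list(product((-1, 1), repeat=4))
responses = [3 * x[0] - 2 * x[1] + x[0] * x[1] for x in runs]
print(interaction_effect(runs, responses, 0, 1))  # x1 with x2
print(interaction_effect(runs, responses, 0, 2))  # x1 with x3
```

Here the effect of x1 is 8 when x2 is high and 4 when x2 is low, so the x1-x2 interaction is (8 - 4) / 2 = 2, while x1 and x3 do not interact. A negative value would mean the effect of one variable weakens (or reverses) at the high level of the other.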

Step 3: Approximation

The system response is approximated using various curve-fitting methods. An approximation of how the response varies with the design variables is calculated from the data of the DOE study above. The accuracy of the approximation can then be checked and improved.
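One common way to build such an approximation is a least-squares polynomial fit. The sketch below, assuming NumPy is available, fits a model with linear and two-factor interaction terms to the 16 full-factorial runs in coded units and reports R² as a simple accuracy check. The data come from a made-up formula, and the helper names are hypothetical; HyperStudy offers its own approximation types, and this is not its implementation.

```python
from itertools import combinations, product

import numpy as np


def design_matrix(runs):
    """Columns: intercept, each x_i, and each pairwise product x_i*x_j."""
    runs = np.asarray(runs, dtype=float)
    n_vars = runs.shape[1]
    cols = [np.ones(len(runs))]
    cols += [runs[:, i] for i in range(n_vars)]
    cols += [runs[:, i] * runs[:, j] for i, j in combinations(range(n_vars), 2)]
    return np.column_stack(cols)


def fit(runs, y):
    """Return least-squares coefficients and the R^2 of the fit."""
    X = design_matrix(runs)
    y = np.asarray(y, dtype=float)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residual = y - X @ beta
    r2 = 1.0 - (residual @ residual) / np.sum((y - y.mean()) ** 2)
    return beta, r2


# Made-up responses: constant + linear + one interaction term, no noise.
runs = list(product((-1, 1), repeat=4))
y = [1.0 + 3 * x[0] - 2 * x[1] + x[0] * x[1] for x in runs]
beta, r2 = fit(runs, y)
print(np.round(beta[:6], 3), round(float(r2), 6))
```

With 16 runs and 11 coefficients, the fit leaves some residual degrees of freedom; on real DOE data an R² well below 1 would suggest trying a richer approximation or adding runs.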

- Add an approximation.

- Define specifications.
